This website has been improved and the new version is found under its new address:

I welcome all readers to the new site.

Hansruedi Straub

Have you ever wondered why the musical scales in all musical cultures, whether in the jungle, in the concert hall or in the football stadium, span precisely an octave? Or why children without any musical education the world over quite spontaneously find the major triad “beautiful”?

The explanation lies in the resonances. No matter how different musical cultures are, they still have a common core. This consists of the resonances which emerge between the notes of the musical scales and chords.

Classical music theory is aware of the fact that there are mathematical fractions in the intervals, but it fails to explain how these fractions come into being and what they have to do with our perception of music.

The fractions represent the mathematics, but behind the mathematics, there are physical reasons. As is known, sound waves are able to reinforce or weaken each other. These physical compatibilities can be calculated if we compare the pitches of the notes involved. A simple rule explains how two notes start to resonate and what happens when three notes or more are involved. In this way, many peculiarities of the various musical scales and chords can be explained.

All these questions can be answered with the physics of sound waves.

How does the connection between mathematics and physics come about in harmony? To find out, we have a look at the most important characteristic of a note, namely its frequency, which determines its pitch. Next, we look at how an interval which is constituted by two notes can be defined as a mathematical fraction.

In physical terms, a note is a sound wave. A sound wave is a vibration in the air or in an object (such as a string), whose most important characteristic is its frequency, i.e. the number of vibrations per second. The standard pitch a’, for example, has a frequency of 440 Hz: it vibrates to and fro 440 times per second – on the string, in the air and in the inner ear.

When two notes sound at the same time, they interrelate in an interval. Depending on how their frequencies interrelate, they mingle in a quieter or tenser manner. This is an effect that we perceive with our hearing. The intervals are calculated from the ratio of the frequencies to each other. In mathematical terms, this ratio is a fraction f1 / f2, i.e. the frequency of the higher note is divided by the frequency of the lower note.
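The division described above can be tried out in a few lines. The following is only a minimal sketch: the function name `interval_ratio` and the frequencies 880, 660 and 550 Hz are illustrative assumptions, chosen as the pure octave, fifth and major third above the standard pitch of 440 Hz.

```python
from fractions import Fraction

def interval_ratio(f_high_hz, f_low_hz):
    """Return the interval f1 / f2 between two notes as a reduced fraction."""
    return Fraction(f_high_hz, f_low_hz)

A4 = 440  # standard pitch a' in Hz, as given in the text

# The upper frequencies are assumed example values, not taken from the text.
print(interval_ratio(880, A4))  # octave: 2
print(interval_ratio(660, A4))  # pure fifth: 3/2
print(interval_ratio(550, A4))  # pure major third: 5/4
```

`Fraction` reduces the ratio automatically, which is why only small numbers remain.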

The calculation of intervals is very simple.

This knowledge enables us to explain our musical scales and chords in simple and consistent terms. And if the above calculations have scared you off because, after all, you are a musician and not a mathematician or an accountant, I can reassure you: no more mathematics than that will be necessary. These are simple fractions with very small numbers, of the kind you learnt in primary school.

The resonances have a great deal to do with these intervals and the fractions that characterise them.

The three sample calculations a) to c) for intervals already characterise the three basic physical degrees of resonance:

The distinction between three degrees as described above takes us further towards our goal of explaining the different musical scales:

Conventional harmony is based on these overtones, but there is a problem: the higher the overtone, the weaker the natural resonance. However, the higher overtone would be required to provide a physical reason for musical scales. In this instance, the conventional explanation of the musical scales fails. → The series of overtones is not a musical scale

It is this resonance of the 3rd degree that is responsible for our musical scales and chords. It explains which intervals are particularly resonant, how several tones mix and how the characteristics of the musical scales and chords that we can perceive are generated. More about this in the following posts.

This is a post in the category musical scales

Translation: Tony Häfliger, Vivien Blandford

(continues “Self-reference 1“)

The trick with which classical logical systems can be invalidated consists of two instructions:

1: A statement refers to itself.

2: The reference or the statement contains a negation.

This constellation always results in a paradox.

A famous example of a paradox is the barber who shaves all the men in the village, except of course those who shave themselves (they don’t need the barber). The formal paradox arises from the question of whether the barber shaves himself. If he does, he’s one of those men who shave themselves and, as the statement about the barber says, he doesn’t shave those men. So he doesn’t shave himself. Therefore, he is one of the men who don’t shave themselves – and those men he shaves.

In this way, the truth of the statement, whether or not he shaves himself, constantly changes back and forth between TRUE and FALSE. This oscillation is typical of all genuine paradoxes, such as the lying Cretan or the formal proof in Gödel’s incompleteness theorem, where the truth of a statement oscillates continuously between true and false and thus cannot be determined. In addition to the typical oscillation, the barber example also clearly shows the two conditions for the true paradox mentioned above:

1. Self-reference: Does he shave HIMSELF?

2. Negation: He does NOT shave men who shave themselves.
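The oscillation can be made visible with a small sketch. It is only an illustration, not a formal treatment: the rule is reduced to a single truth value ("does the barber shave himself?"), and the names `barber_rule`, `iterate` and `fixed_points` are hypothetical.

```python
def barber_rule(shaves_himself: bool) -> bool:
    """Self-reference plus negation: the barber shaves himself
    exactly if he does NOT shave himself."""
    return not shaves_himself

def iterate(rule, start: bool, steps: int) -> list:
    """Apply the rule repeatedly and record each truth value."""
    values = [start]
    for _ in range(steps):
        values.append(rule(values[-1]))
    return values

def fixed_points(rule) -> list:
    """Truth values that are consistent with the rule (none for a true paradox)."""
    return [v for v in (True, False) if rule(v) == v]

print(iterate(barber_rule, True, 5))  # [True, False, True, False, True, False]
print(fixed_points(barber_rule))      # []
```

Removing the negation (a rule that simply returns its input) makes both truth values stable, which suggests why both conditions are needed for the paradox.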

At this point, reference can be made to Spencer-Brown, who developed a calculus that clearly demonstrates these relationships. The calculus is presented in his famous book ‘Laws of Form’. Felix Lau explained the ideas to us laymen in his book ‘Die Form der Paradoxie’.

The ‘classical’ and true paradoxes can be juxtaposed with ‘false’ paradoxes. A good example is the ‘paradox’ of Achilles and the tortoise. This false paradox does not contain a genuine logical problem, as in the case of the barber, but is based on an inappropriately chosen model which leads to Zeno’s surprising but wrong conclusion. The times the two competitors take and the distances they run get shorter and shorter and thus approach a value that cannot be exceeded within the selected (wrong) model. This means that Achilles cannot overtake the tortoise in the model. In reality, however, there is no reason for the times and distances to be distorted in such a way.

The impossibility of overtaking the tortoise only exists in the model, which has been incorrectly selected in an ingenious way. A measurement system that distorts things in this way is of course not appropriate. In reality, it is only a duplicitous choice of model, and not a real paradox. Accordingly, the two criteria for genuine paradoxes are not present.
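A short calculation shows how the model traps Achilles. The sketch below rests on assumed example values (a head start of 100 m, speeds of 10 m/s and 1 m/s), and the function names are hypothetical. Zeno's model only ever counts the steps up to the tortoise's previous position, so its clock approaches the overtaking time without ever passing it.

```python
def zeno_elapsed(head_start_m, v_achilles, v_tortoise, steps):
    """Time elapsed in Zeno's model after a given number of catch-up steps."""
    gap = head_start_m
    elapsed = 0.0
    for _ in range(steps):
        dt = gap / v_achilles   # time Achilles needs to reach the tortoise's last position
        elapsed += dt
        gap = v_tortoise * dt   # distance the tortoise has moved on in the meantime
    return elapsed

def overtaking_time(head_start_m, v_achilles, v_tortoise):
    """Time at which Achilles draws level in an ordinary, undistorted model."""
    return head_start_m / (v_achilles - v_tortoise)

for steps in (1, 2, 5, 50):
    print(steps, zeno_elapsed(100, 10, 1, steps))
print("overtaking at", overtaking_time(100, 10, 1))
```

However many steps are added, the elapsed time in the model stays below the roughly 11.1 seconds after which Achilles, in reality, simply runs past.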

The example of Achilles and the tortoise shows the importance of a correct choice of model. The choice of model always takes place outside the representation of the solution and is not the subject of a logical proof. Rather, the choice of model has to do with the relationship of logic to reality. It takes place on a superordinate meta-level.

I postulate that the field of logic should necessarily include the choice of model and not just the calculation within the model. How do we choose a model? If logic is the study of correct thinking, then this question must also be addressed by logic.

The interaction of two levels, namely an observed level and a superordinate, observing meta-level, plays a necessary role in the choice of model, which always takes place on the meta-level which observes the lower level.

The interaction between the two levels can be observed, too. This is exactly the case in the true paradoxes, e.g. in the barber paradox. The self-reference in the true paradox inevitably introduces the two levels. This happens because an observed statement refers to itself and thus exists twice, once on the level under consideration, on which it is the ‘object’, as it were, and secondly on the meta-level, on which it refers to itself. The oscillation in the paradox arises through a ‘loop’, i.e. through a circular process between the two levels from which the logical system cannot escape – and it oscillates because the negation makes it twist at each turn.

Incidentally, there are two types of self-referential logical loops, as Spencer-Brown and Lau point out:

In other words: self-reference in logical systems is always dangerous!

In order to correctly handle paradoxes in logical systems, it is worth introducing a ‘meta-leap’ – a relationship between the observed level and the meta-level.

The acceptance of the two levels and their relation is crucial for understanding the relationship between reality and formal logic.

Self-reference causes classical logical systems such as FOL to break down.

See also: Paradoxes and Logic

More on the topic of logic -> Logic overview page

Translation: Juan Utzinger, Vivien Blandford

In the 1980s, I read Douglas Hofstadter’s cult book ‘Gödel-Escher-Bach’ with fascination. Central to it is Gödel’s incompleteness theorem. This theorem shows the limit of classical mathematical logic. In 1931, Gödel proved both that this limit exists and that it is insurmountable, as a matter of principle, for all classical mathematical systems.

This is quite astonishing! Is mathematics imperfect? As inheritors of the Age of Enlightenment and convinced disciples of rationality, we consider nothing to be more stable and certain than mathematics.

Hofstadter’s book impressed me. However, at certain points, for instance on the subject of the ‘coding’ of information, I had the impression that certain aspects were greatly simplified by the author. In my opinion, the way in which information is incorporated into an interpreting system plays a major role in the recognition process in which information is picked up. The integrating system is itself active and participates in the decision-making process. Information is not exactly the same before and after integration. Does the interpreter, i.e. the receiving (coding) system, have no influence here? And if it does have any influence, what is that influence?

In addition, the aspect of ‘time’ did not seem to me to be sufficiently taken into account. In the real world, information processing always takes place within a certain period of time. There is a before and an after. A receiving system is also changed by this. In my opinion, time and information are inextricably linked. Hofstadter seemed to miss something here.

My reception of Hofstadter was further challenged by Hofstadter’s positioning as a representative of ‘strong AI’. The ‘strong AI’ hypothesis states that human thinking, indeed human consciousness, can be simulated by computers on the basis of mathematical logic, a hypothesis that seemed – and still seems – rather daring to me.

Roger Penrose is said to have been provoked into writing his book ‘The Emperor’s New Mind’ by a BBC programme in which Hofstadter, Dennett and others enthusiastically advocated the strong AI thesis, which Penrose obviously does not share. As I said, neither do I.

But of course, front lines are never that simple. Although I am certainly not on the side of strong AI, Hofstadter’s presentation of Gödel’s incompleteness theorem as a central insight of 20th century science remains unforgettable for me. I also read with enthusiasm the interview with Hofstadter that appeared in Spiegel (DER SPIEGEL 18/2014: ‘Language is everything’). In it, he postulates, among other things, that analogies are decisive in scientists’ thinking and he differentiates his interests from those of the profit-oriented IT industry. These are thoughts that one might very well endorse.

But let’s go back to Gödel. What – in layman’s terms – is the trick in Gödel’s incompleteness theorem?

The trick is the same as in the barber paradox and all other real paradoxes. The trick is to make a sentence, a logical statement and …

1. to refer it to itself (self-reference)

2. and then to deny it. (negation)

That’s the whole trick. With this combination, any classic formal system can be invalidated.

I’m afraid I need to explain this in more detail …

→ “Self-reference 2“

Self-referentiality causes classical logical systems such as FOL or Boolean algebra to break down.

More on the topic of logic → Overview Logic page

German original (2015): Selbstreferenz

Translation: Juan Utzinger, Vivien Blandford

Littering in space had been a concern long before Elon Musk’s Starlink programme, and various methods for cleaning up the growing clutter in Earth’s orbit are currently under discussion. The task is not easy because, owing to the second law of thermodynamics (the inevitable increase in entropy), all littering tends to increase exponentially. If one of the thousands of pieces of scrap metal in space is hit by another, the collision creates many new pieces that fly around at enormous speeds. Space pollution is therefore a self-perpetuating phenomenon that tends to grow exponentially.

But haven’t we known about this problem for a long time? The Polish writer Stanislaw Lem had already written about it in the 1960s when he wrote his science fiction story “Star Diaries” about the travels of a certain cosmonaut called Ijon Tichy. In his 21st voyage, Tichy lands on a planet which had survived complete littering by satellites. The well-travelled cosmonaut writes:

“Every civilisation that is in the technical phase gradually begins to sink into the waste, causing enormous worries.”

Tichy goes on to describe how waste is therefore disposed of in space around the planet. This, however, causes new problems there, with consequences that also became apparent to cosmonaut Tichy.

The 21st journey, however, has something else to offer. The main theme of Tichy’s 21st journey – as in many of Stanislaw Lem’s stories – is artificial intelligence.

On the now purified planet, Tichy encounters not only another unpleasant consequence of the second law (namely degenerate biogenetics), but also an order of monks consisting of robots. These robots discuss the conditions and consequences of their artificial intelligence with Tichy. For example, the robot prior says about the conclusiveness of algorithms:

“Logic is a tool,” replied the prior, “and nothing results from a tool. It must have a shaft and a guiding hand.” (Lem 1971, p. 272)

Without realising the connection, I followed in the footsteps of Lem’s robot prior and wrote about AI in 2021:

“An entity (intelligence) […] needs to establish a relationship between the data and the objective of the analysis in order to interpret the data. This task is always linked to an entity with a specific intent.” (Straub 2021, pp. 64-65)

50 years ago, Stanislaw Lem had already formulated what I believe to be the fundamental difference between the intelligence of a tool and an animate (i.e. biological) intelligence – namely the intent that guides the logic. The intent cannot be set by a machine itself, but must be provided from outside by the creators, by the “guiding hands” of the machine. The creators can do this in various ways, e.g. by selecting the training data, or by shaping the AI algorithms in the most desired direction. In other words: The intelligence of the AI is formed from outside.

Human intelligence, on the other hand, can – especially if we don’t want to be robots – determine its own goals. In the words of Lem’s prior, it consists not only of the logic that is guided by the guiding hand, but also includes the guiding hand itself.

As a consequence of this consideration, one can draw the following conclusion about AI:

If we make use of the technical possibilities of AI (and why shouldn’t we?), then we should always take into account the aim of our algorithms.

I think this is just what the robot intelligence of Lem’s prior wanted to say to Tichy.

Translation: Juan Utzinger, Vivien Blandford

This is a post on Artificial Intelligence

Literature

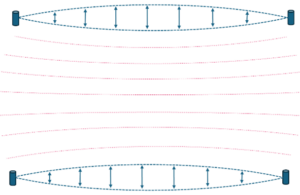

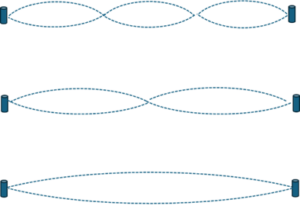

Resonance is always based on the natural oscillations of two physical objects and their mutual coupling

Natural vibrations are standing waves whose frequency is determined by the properties of the physical medium (size, shape, material, etc.).

Two such media can enter into resonance through their oscillations. The resonance is created by coupling the two oscillations so that the two physical media form a coupled unit in their oscillating behaviour.

The coupling takes place through a physical exchange of energy, either directly or indirectly, e.g. through the air. The prerequisite for the coupling to occur is that the frequencies of the natural vibrations of the two physical media involved are in an appropriate mathematical ratio to each other.

Once the resonance state has been established, it remains stable for a certain period of time, i.e. the coupled oscillation state remains stationary, often over a longer time period. This astonishing behaviour has to do with the energy situation, which is particularly favourable when the two objects are coupled.

The internal oscillation of an individual physical object can also be described as resonance. For example, an electron around the nucleus of an atom resonates with itself during its cycles and can therefore only swing into very specific orbital frequencies that allow it to resonate with itself on its orbital trajectory. This leads to the specific frequency of the natural oscillation of the electron in the atom. The same applies to the vibrational behaviour of a string of a musical instrument.

The physical material determines the inherent frequency of the vibrating object. The frequency of the coupled resonance, however, results from the appropriate ratio of the natural frequencies of the two media involved. The frequency of the evolving resonance follows mathematical rules. Mathematics is abstract, and amazingly simple abstract mathematical rules are enough to discern which frequencies will generate resonances and how strong the resonance between the two vibrating physical media will be.

Once the internal oscillations of the involved objects are set, it is only the abstract mathematical ratio of the two frequencies which allows the development of a resonance and determines its strength.

The emergence of resonance impressively demonstrates the interplay between two of the Three Worlds that, according to Nobel Prize winner Roger Penrose, form our reality. Roger Penrose names these two worlds the physical and the platonic. The term platonic refers to the abstract world of ideas, to which mathematics belongs. By using this term for the world of mathematics, Sir Roger refers to European cultural history. Here, the discussion about the reality of ideas is not only part of Plato’s philosophy, but also characterises large parts of the philosophical discourse in the Middle Ages that was known as the problem of universals.

The issue has lost none of its relevance since then: How real are ideas? Why does the abstract prevail in the material world? What is the relationship between abstract ideas and the concrete, i.e. physical world?

In 2020, I realised that the emergence of resonance in music would be a good example with which to explore the relationship between physics, maths and the third world, i.e. our subjective perception. I was surprised at how clearly the relationship between the three worlds can be presented here and how astonishingly simple, logical and far-reaching the maths in the harmonies of our music is.

In a piece of music, the resulting resonances between the notes change again and again, creating a fascinating change in tone colour. We can experience it intuitively, but we can also explain it rationally, as a play of resonances between the notes.

At school I learnt that the overtone series determines our scales. But that is a gross simplification. The phenomenon of resonance can explain our scales much more simply and directly than can the overtone series on its own. The overtone series only describes the vibration behaviour within one physical medium – the resonance in music, however, always arises between at least two different media (tones). For the considerations regarding the resonance of two tones, we must consequently also compare two overtone series. Only the juxtaposition of the two series explains what is happening – a fact that is usually ignored in textbooks.
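What juxtaposing two overtone series can look like is sketched below. This is only an illustration under stated assumptions: it looks for overtones that coincide exactly, which is a simplification and not necessarily the resonance rule developed in these posts. The frequencies 440, 660 and 466 Hz and the function names are hypothetical example choices.

```python
def overtones(fundamental_hz, count):
    """First `count` members of the overtone series (integer multiples)."""
    return [fundamental_hz * n for n in range(1, count + 1)]

def shared_overtones(f1_hz, f2_hz, count=12):
    """Frequencies that occur in both overtone series."""
    return sorted(set(overtones(f1_hz, count)) & set(overtones(f2_hz, count)))

# A pure fifth (3/2): the two series meet again and again.
print(shared_overtones(440, 660))  # [1320, 2640, 3960, 5280]

# Roughly a semitone apart: no meeting point within the first 12 overtones.
print(shared_overtones(440, 466))  # []
```

A single overtone series cannot show this difference; it only appears when the two series are laid side by side.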

Chords consist of three or more tones. Here too, the resonance analysis of the three or more tones in question can explain the chord effect with astonishing simplicity. Only this time, it is not the frequencies of two but of a number of tones that have to be taken into account simultaneously.

In Europe, equal temperament tuning became established in the Baroque period, which expanded the compositional possibilities in many ways. The first thing the layman finds on the theory of scales is therefore a precise description of the deviations of tempered tuning from pure tuning – but these deviations are only of marginal importance for the development of resonances. Pure tuning is not a condition for resonance; the mathematics of resonance presented here explains the phenomenon even with tempered tuning.
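How small these deviations are can be checked with a short calculation. The sketch assumes twelve-tone equal temperament (each semitone has the ratio 2^(1/12)) and expresses the differences in cents, a common logarithmic unit that is not used in the text itself; the function names are hypothetical.

```python
import math

def cents(ratio):
    """Size of an interval in cents (1200 cents = one octave)."""
    return 1200 * math.log2(ratio)

def tempered_ratio(semitones):
    """Frequency ratio of an interval in twelve-tone equal temperament."""
    return 2 ** (semitones / 12)

# (pure ratio, number of tempered semitones)
INTERVALS = {"fifth": (3 / 2, 7), "major third": (5 / 4, 4)}

for name, (pure, semitones) in INTERVALS.items():
    deviation = cents(tempered_ratio(semitones)) - cents(pure)
    print(f"{name}: {deviation:+.2f} cents")
```

The tempered fifth comes out about 2 cents narrower than the pure fifth, and the tempered major third about 14 cents wider than the pure major third, both small fractions of a semitone (100 cents).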

This is a contribution to Penrose’s Three-Worlds Theory and the Origin of Scales.

Translation: Juan Utzinger, Vivien Blandford

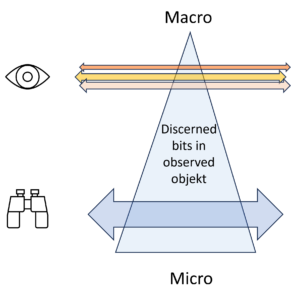

The conventional physical definition of entropy characterises it as a difference between two levels: a detail level and an overview level.

The classic example is thermal entropy according to Boltzmann, illustrated by an ideal gas. The temperature (1 value) is directly linked to the kinetic energies of the individual gas molecules (10²³ values). With certain adjustments, this applies to any material object, e.g. also to a coffee cup:

The values of a) and b) are directly connected. The heat energy of the liquid, which is expressed in the temperature of the coffee, is made up of the kinetic energies of the many (~10²³) individual molecules in the liquid. The faster the molecules move, the hotter the coffee.

The movement of the individual molecules b) is not constant, however. Rather, the molecules are constantly colliding, changing their speed and therefore their energy. Because energy is conserved, the energy of each molecule involved changes with every collision, but the energy of all the molecules involved together remains the same. Even if the coffee cools down slowly or if the liquid is heated from the outside, the interdependence is maintained: the single overall value (temperature) and the many detailed values (movements) are always interdependent.
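The interplay of the many changing detail values and the one stable overall value can be imitated with a toy model. The sketch below is not real collision physics: each "collision" simply redistributes the combined energy of two randomly chosen molecules at random, and the mean energy stands in for the temperature. All names and numbers are assumptions for illustration.

```python
import random

def simulate_collisions(energies, n_collisions, seed=0):
    """Redistribute energy between random pairs; each pair's total is preserved."""
    rng = random.Random(seed)
    energies = list(energies)
    for _ in range(n_collisions):
        i, j = rng.sample(range(len(energies)), 2)
        pair_total = energies[i] + energies[j]
        energies[i] = rng.random() * pair_total  # random share for molecule i
        energies[j] = pair_total - energies[i]   # the rest goes to molecule j
    return energies

start = [1.0] * 1000                      # micro level: 1000 individual energies
end = simulate_collisions(start, 10_000)

# Macro level: one value (the mean energy), unchanged up to rounding errors.
print(sum(start) / len(start), sum(end) / len(end))
# Micro level: the individual values now differ widely.
print(min(end), max(end))
```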

The well-known proverb warns us against not seeing the wood for the trees. This is a helpful picture for the tension between micro and macro level.

Forest: macro level

Trees: micro level

On the micro level we see the details, on the macro level we recognise the big picture. So which view is better? The forest or the trees?

We generally believe that it is better to know all the details. But this is a delusion. We always need an overview. Otherwise we would get lost in the details.

We can now enumerate all the details of the micro view and thus obtain the information content – e.g. in bits – of the micro state. In the macro state, however, we have a much smaller amount of bits. The difference between the two amounts is the entropy, namely the information that is present in the micro state (trees) but missing in the macro state (forest).
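A deliberately small example may help here. It assumes a forest in which each tree is either healthy or sick, and a macro statement of the form "k of the n trees are sick". The information missing in the macro state is then counted in the Boltzmann manner, as the logarithm of the number of micro states compatible with it. The function names and numbers are hypothetical.

```python
import math

def micro_bits(n_trees):
    """Bits needed to record healthy/sick for every single tree (one bit each)."""
    return n_trees

def missing_bits(n_trees, n_sick):
    """Bits of detail left open by the macro statement
    'n_sick of n_trees are sick': log2 of the number of compatible micro states."""
    return math.log2(math.comb(n_trees, n_sick))

n = 8
print(micro_bits(n))       # 8 bits describe every tree individually
print(missing_bits(n, 0))  # 0.0 -> 'no tree is sick' leaves nothing open
print(missing_bits(n, 4))  # about 6.13 bits: which 4 of the 8 trees are sick?
```

The same forest thus carries more or less entropy depending on which macro statement is made about it.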

The information content at the micro level can be calculated in bits. Does this amount of bits correspond to entropy? If so, the information content at the macro level would simply be a loss of information. The actual information would then be in the micro level of details.

This is the spontaneous expectation that I repeatedly encounter with dialogue partners. They assume that there is an absolute information content, and in their eyes, this is naturally the one with the greatest amount of detail.

A problem with this conception is that the ‘deepest’ micro-level is not clearly defined. The trees are a lower level of information in relation to the forest – but this does not mean that the deepest level of detail has been reached. You can describe the trees in terms of their components – branches, twigs, leaves, roots, trunk, cells, etc. – which is undoubtedly a deeper level than just trees and would contain even more details. But even this level would not be deep enough. We still can go deeper into the details and describe the different cells of the tree, the organelles in the cells, the molecules in the organelles and so on. We would then arrive at the quantum level. But is that the deepest level? Perhaps, but that is not certain. And the further we go into the details, the further we move away from the description of the forest. What interests us is the description of the forest and the lowest level is not necessary for this. The deeper down we search, the further we move away from the description of our object.

→ The deepest micro level is not unequivocally defined!

We can therefore not assign a distinct absolute entropy to our object. Because the micro level can be set at any depth, the entropy, i.e. the quantitative information content at this level, also changes. The deeper the level, the more information and the higher the entropy.

Like the micro level, the highest information level, e.g. of a forest, is not clearly defined either.

Is this macro level the image that represents an optical view of the forest as seen by a bird flying over it? Or is it the representation of the forest on a map? At what scale? 1:25,000 or 1:100,000? Obviously the amount of information of the respective macro state changes depending on the view.

What are we interested in when we describe the forest? The paths through the forest? The tree species? Are there deer and rabbits? How healthy is the forest?

In other words, the forest, like any object, can be described in very different ways.

There is no clear, absolute macro level. A different macro representation applies depending on the situation and requirements.

At each level, there is a quantitative amount of information: the deeper the level, the richer the information; the higher the level, the clearer. It would be a mistake, however, to label a specific level with its amount of information as the lowest or the highest. Both are arbitrary. They are not set by the object, but by the observer.

As soon as we accept that both micro and macro levels can be set arbitrarily, we approach a more realistic concept of information. It suddenly makes sense to speak of a difference. The difference between the two levels defines the span of knowledge.

The information that I can gain is the information that I lack at the macro level, but which I find at the micro level. The difference between the two levels in terms of their entropy is the information that I can gain in this process.

Conversely, if I have the details of the micro level in front of me and want to gain an overview, I have to simplify this information of the micro level and reduce its number of bits. This reduction is the entropy, i.e. the information that I consciously relinquish.

If I want to extract the information that interests me from a jumble of details, i.e. if I want to get from a detailed description to useful information, then I have to ignore a lot of information at the micro level. I have to lose information in order to get the information I want. This paradox underlies every analytical process.

What I am proposing is a relative concept of information. This does not correspond to the expectations of most people who have a static idea of the world. The world, however, is fundamentally dynamic. We live in this world – like all other living beings – as information-processing entities. The processing of information is an everyday process for all of us, for all biological entities, from plants to animals to humans.

The processing of information is an existential process for all living beings. This process always has a before and an after. Depending on this, we gain information when we analyse something in detail. And if we want to gain an overview or make a decision (!), then we have to simplify information. So we go from a macro-description to a micro-description and vice versa. Information is a dynamic quantity.

Entropy is the information that is missing at the macro level but can be found at the micro level.

And vice versa: entropy is the information that is present at the micro level but – to gain an overview – is ignored at the macro level.

We can assume that a certain object can be described at different levels. According to current scientific findings, it is uncertain whether a deepest level of description can be found, but this is ultimately irrelevant to our information theory considerations. In the same way, it does not make sense to speak of a highest macro level. The macro levels depend on the task at hand.

What is relevant, however, is the distance, i.e. the information that can be gained in the macro state when deeper details are integrated into the view, or when they are discarded for the sake of a better overview. In both cases, there is a difference between two levels of description.

The illustration above visualises the number of detected bits in an object. At the top of the macro level, there are few, at the bottom of the micro level there are many. The object remains the same whether many or few details are taken into account and recognised.

The macro view brings a few bits, but their selection is not determined by the object alone, but rather by the interest behind the view of the observer.

The number of bits, i.e. the entropy, decreases from bottom to top. The height of the level, however, is not a property of the object of observation, but a property of the observation itself. Depending on my intention, I see the observed object differently, sometimes detailed and unclear, another time clear and simplified, i.e. sometimes with a lot of entropy and another time with less entropy.

Information acquisition is the dynamic process that either:

a) gains more details: Macro → Micro

b) gains more overview: Micro → Macro

In both cases, the amount of information (entropy as the amount of bits) is changed. The bits gained or lost correspond to the difference in entropy between the micro and macro levels.

When I examine the object, it reveals more or less information depending on how I look at it. Information is always relative to prior knowledge and must be understood dynamically.

Translation: Juan Utzinger

When we speak, we use words to describe the objects in our environment. With words, however, we do not possess the objects, but only describe them, and as we all know, words are not identical to the objects they describe. It is obvious that there is no identity.

Some funny examples of the not always logical use of words can be found in the following text (in German), which explains why the quiet plays loudly and the loud plays quietly.

Fig. 1: The piano (the quiet one)

Fig. 2: The lute (the wood)

But what does the relationship between words and objects look like if it is not an identity? It cannot be a 1:1 relationship, because we use the same word to describe different objects. Conversely, we can use several words for the same object. The relationship is not fixed either, because the same word can mean something different depending on the context. Words also change relentlessly over time, in both their sound and their meaning.

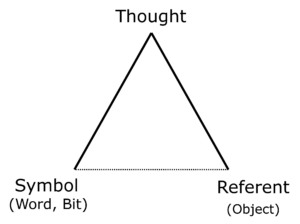

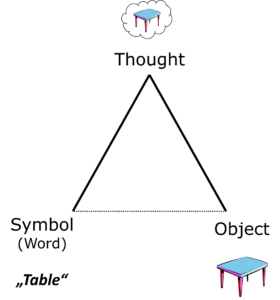

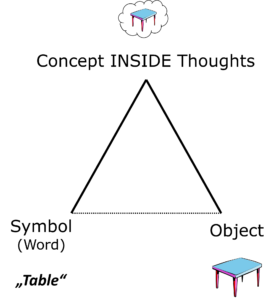

The relationship between words and designated objects is clearly illuminated by Ogden and Richards’ famous depiction of the semiotic triangle from 1923.¹

Fig. 3: The semiotic triangle according to Ogden and Richards¹

Fig. 4: The semiotic triangle exemplified by the word ‘table’

The idea of the triangle has many predecessors, including Gottlob Frege, Charles Peirce, Ferdinand de Saussure and Aristotle.

Ogden and Richards use the semiotic triangle to emphasise that we should not confuse words, objects and concepts. The three points of the triangle point to three areas that are completely different in nature.

The tricky thing is that we are not just tempted but entirely justified in treating the three points as if they were identical. We want the word to describe an object precisely. We want our concepts to correspond exactly with the words we use for them. Nevertheless, the words are not the objects, nor are they concepts.

Ogden and Richards have this to say: «Between the symbol [word] and the referent [object] there is no other relevant relationship than the indirect one, which consists in the fact that the symbol is used by someone [subject] to represent a referent.»

The relationship between the word (symbol, sign) and the object (referent) is always indirect and runs via someone’s thought: the word activates a mental concept in ‘someone’, i.e. a human subject, speaker or listener. This inner image is the concept.

Fig. 5: This is how Ogden and Richards see the indirect relationship between symbol and indicated object (referent). The thoughts contain the concepts.

The diminished baseline in Fig. 5 can also be found in the original of 1923. Symbol (word) and referent (reference object) are only indirectly connected via the thoughts in the interpreting subjects. This is where the mental concepts are found. The concepts are the basic elements of our thoughts.

When we deal with semantics, it is essential to take a look at the triangle. Only the concepts in our head connect the words with the objects. Any direct connection is an illusion.

This is a post on the topic of semantics.

Translation: Juan Utzinger

1 Ogden C.K. and Richards I.A. 1989 (1923): The Meaning of Meaning. Orlando: Harcourt.

The term entropy is often avoided because it contains a certain complexity that cannot be argued away.

But when we talk about information, we also have to talk about entropy. Because entropy is the measure of the amount of information. We cannot understand what information is without understanding what entropy is.

We believe that we can pack information, just as we store bits in a storage medium. Bits are then the information that is objectively available, like little beads in a chain that can say yes or no. For us, this is information. But this image is deceptive. We have become so accustomed to this picture that we can’t imagine otherwise.

Of course, the bit-beads do not say ‘yes’ or ‘no’, nor 0 or 1, nor ‘true’ or ‘false’, or anything else in particular. Bits have no meaning at all, unless you have defined this meaning from the outside. Then they can perfectly say 1, ‘true’, ‘I’m coming to dinner tonight’ or something else, but only together with their environment, their context.

This consideration makes it clear that information is relative. The bit only acquires its meaning from its placement relative to its context. Depending on its relative context, it means 0 or 1, ‘true’ or ‘false’, etc. The bit is set as a signal in its place, but its meaning only comes from its place.

The place and its relative context must therefore be taken into account so that it becomes clear what the bit is supposed to mean. And of course, the meaning is relative, i.e. the same bit can have a completely different meaning in a different context, a different place.

This relativity characterises not only the bit, but every type of information. Every piece of information only acquires its meaning through the context in which it is placed. It is therefore relative. Bits are just signals. What they mean only becomes clear when you interpret the signals from your perspective, when you look at them from your context.

Only then does the signal take on a meaning for you. This meaning is not absolute, because whenever we try to isolate it from its context, it will be reduced to a mere signal. The meaning can only be found relatively in the interaction between your expectation, the context and the position of the bit. There it is a switch, which can be set to ON or OFF. However, ON and OFF only inform us about the position of the switch. Everything else is in the context.
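The point that a bit only reports the position of a switch, while its meaning comes from the context, can be sketched in a few lines of Python. The context names and the `interpret` helper are purely illustrative assumptions and are not part of any real system.

```python
# Hypothetical context tables: names and meanings are illustrative only.
CONTEXTS = {
    "arithmetic": {0: 0, 1: 1},
    "logic": {0: False, 1: True},
    "dinner_invitation": {
        0: "I'm not coming to dinner tonight",
        1: "I'm coming to dinner tonight",
    },
}

def interpret(bit: int, context: str):
    """Return the meaning that the given context assigns to a bare bit."""
    if bit not in (0, 1):
        raise ValueError("a bit has exactly two positions")
    return CONTEXTS[context][bit]

signal = 1  # the switch position; on its own it carries no meaning
for name in CONTEXTS:
    print(f"{name}: {interpret(signal, name)!r}")
```

The same signal yields three different meanings, and without a context table the function cannot return anything at all.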

Considering how important information and information technologies are today, it is astonishing how little is known about the scientific definition of entropy, i.e. information:

Entropy can be defined as a measure of the information that is

– known at the micro level

– but unknown at the macro level.

Entropy is therefore closely related to information at the micro and macro levels and can be seen as the ‘distance’ or difference between the information at the two information levels.

What is meant by this gap between the micro and macro levels? – When we look at an object, the micro level contains the details (i.e. a lot of information), and the macro level contains the overview (i.e. less, but more targeted information).

The distance between the two levels can be very small (as with the bit, where the microlevel knows just two pieces of information: on or off) or huge, as with the temperature (macrolevel) in a cup of coffee, for example, where the kinetic energies of the many molecules (microlevel) determine the temperature of the coffee. The number of molecules in this case lies in the order of Avogadro’s number (10²³), i.e. quite high, and the entropy of the coffee in the cup is correspondingly high.

On the other hand, when the span between the micro and macro levels becomes very narrow, the information (entropy) will be small and comes very close to the size of a bit (information content = 1). However, it always depends on the relation between the micro and macro levels. This relation – i.e. what is known in the micro level but not in the macro level – defines the information that you receive, namely the information that a closer look at the details reveals.
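As a rough sketch of this gap, we can count how many micro-states are compatible with one macro-state. The simplifying assumption here (not made in the text) is that all these micro-states are equally likely; the information hidden from the macro level is then the base-2 logarithm of their number. The function name `hidden_bits` is hypothetical.

```python
import math

def hidden_bits(micro_states_per_macro_state: int) -> float:
    """Bits known at the micro level but unknown at the macro level,
    assuming all compatible micro-states are equally likely."""
    if micro_states_per_macro_state < 1:
        raise ValueError("at least one micro-state is required")
    return math.log2(micro_states_per_macro_state)

print(hidden_bits(2))  # a single bit (on or off): 1.0
print(hidden_bits(8))  # eight possible details: 3.0
print(hidden_bits(1))  # micro and macro level coincide: 0.0
```

The narrower the span between the two levels, the closer the result comes to 1 bit, and it reaches 0 when a closer look reveals nothing new.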

A state at the macro level always contains less information than that in the micro state. The macro state is not complete, it never can contain all the information one could possibly get by a closer look, but in most cases it is a well targeted and intended simplification of the information at the micro level.

This means that the same micro-state can supply different macro-states. For example: a certain individual (micro level), can belong to the collective macro groups of Swiss inhabitants, computer scientists, older men, people who were alive in 2024, etc., all at the same time.

The possibility of simultaneously drawing out several macro-states from the same micro-state is characteristic of the complexity of micro- and macro-states and thus also of entropy.

If we transfer the entropy consideration of thermodynamics to more complex networks, we must deal with their higher complexity, but the ideas of micro and macro state remain and help us to understand what is going on when information is gained and processed.

Translation: Juan Utzinger, Vivien Blandford

Continued in Entropy, Part 2

See also:

– Paradoxes and Logic, Part 2

– Georg Spencer-Brown’s Distinction and the Bit

– What is Entropy?

– Five Preconceptions about Entropy

The term entropy is often avoided because it contains a certain complexity. The phenomenon entropy, however, is constitutive for everything that is going on in our lives. A closer look is worth the effort.

Entropy is a measure of information and it is defined as:

Entropy is the information

– known at micro,

– but unknown at macro level.

The challenge of this definition is:

The micro level contains the details (i.e. a lot of information), the macro level contains the overview (i.e. less, but more targeted information). The distance between the two levels can be very small (as with the bit, where the microlevel knows just two pieces of information: on or off) or huge, as with the temperature (macrolevel) of the coffee in a coffee cup, where the kinetic energies of the many molecules (microlevel) determine the temperature of the coffee. The number of molecules in the cup is really large (in the order of Avogadro’s number, 10²³) and the entropy of the coffee in the cup is correspondingly high.

Entropy is thus defined by the two states and their difference. However, states and difference are neither constant nor absolute, but a question of observation, therefore relative.

Let’s take a closer look at what this relativity means for the macro level.

In many fields like biology, psychology, sociology, etc. and in art, it is obvious to me as a layman that the notion of the two levels is applicable to these fields, too. They are, of course, more complex than a coffee cup, so that the simple thermodynamic relationship between micro and macro becomes more complex.

In particular, it is conceivable to have a mixture of several macro-states occurring simultaneously. For example, an individual (micro level), may belong to the macro groups of the Swiss, the computer scientists, the older men, the contemporaries of 2024, etc – all at the same time. Therefore, applying entropy reasoning to sociology is not as straightforward as the simple examples like Boltzmann’s coffee cup, Salm’s lost key, or a basic bit might suggest.

Micro and macro level of an object both have their own entropy. But what really matters is the difference of the two entropies. The bigger the difference, the more is unknown on the macro level about the micro level.
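The difference of the two entropies can be illustrated with Shannon's formula. The following is only a sketch under stated assumptions: the four micro-states, their probabilities and the grouping into two macro-states are invented for illustration, and each micro-state is assigned to exactly one macro-state (the simple case, not the mixture of several macro-states discussed above).

```python
import math
from collections import defaultdict

def shannon_entropy(probabilities) -> float:
    """Shannon entropy in bits; zero-probability states contribute nothing."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

# Hypothetical micro-level distribution over four detailed states.
micro = {"a1": 0.5, "a2": 0.25, "b1": 0.125, "b2": 0.125}
# Hypothetical simplification: each micro-state belongs to one macro-state.
macro_of = {"a1": "A", "a2": "A", "b1": "B", "b2": "B"}

# The probability of a macro-state is the sum over its micro-states.
macro = defaultdict(float)
for state, p in micro.items():
    macro[macro_of[state]] += p

h_micro = shannon_entropy(micro.values())
h_macro = shannon_entropy(macro.values())
print(f"micro level: {h_micro:.3f} bits")
print(f"macro level: {h_macro:.3f} bits")
print(f"unknown at the macro level: {h_micro - h_macro:.3f} bits")
```

With these numbers the micro level carries 1.75 bits and the macro level about 0.81 bits, so roughly 0.94 bits remain hidden from the macro view.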

The difference between micro and macro level says a lot about the way we perceive information. In simple words: when we learn something new, information is moved from micro to macro state.

The conventional definition of entropy states that it represents the information present in the micro but absent in the macro state. This definition of entropy via the two states means that the much more detailed microstate is not primarily visible to the macrostate. This is exactly what Niklas Luhmann meant when he spoke of intransparency1.

When an observer interprets the incoming signals (micro level) at his macro level, he attempts to gain order or transparency from an intransparent multiplicity. How he does this is an exciting story. Order – a clear and simple macro state – is the aim in many places: In my home, when I tidy up the kitchen or the office. In every biological body, when it tries to maintain constant form and chemical ratios. In society, when unrest and tensions are a threat, in the brain, when the countless signals from the sensory organs have to be integrated in order to recognise the environment in a meaningful interpretation, and so on. Interpretation is always a simplification, a reduction of information = entropy reduction.

An essential point is that the information reduction from micro to macro state is always carried out by an active interpreter and guided by his interest.

The human body, e.g., controls the activity of the thyroid hormones via several control stages, which guarantee that the resulting state (macro state) of the activity of body and mind remains within an adequate range even in case of external disturbances.

The game of building up a macro state (order) out of the many details of a micro state is to be found everywhere in biology, sociology and in our everyday life.

There is – in all these examples – an active control system that steers the reduction of entropy in terms of the bigger picture. This control in the interpretation of the microstate is a remarkable phenomenon. Whenever transparency is wanted, an information-rich micro state must be simplified to a macro state with fewer details.

Entropy can then be measured as the difference in information from the micro to the macro level. When the observer interprets signals from the micro level, he creates transparency from intransparency.

We can now have a look at the entropy relations in the re-entry phenomenon as described by Spencer-Brown2. Because the re-entry ‘re-enters’ the same distinction that it has just identified, there is hardly any information difference between before and after the re-entry and therefore hardly any difference between its micro and macro state. After all, it is the same distinction.

However, there is a before and an after, which may oscillate, whereby its value becomes imaginary (this is precisely described in chapter 11 of Spencer-Brown’s book ‘Laws of Form’)2. Re-entries are very common in thinking and in complex fields like biology or sociology when actions and their consequences meet their own causes. These loops or re-entries are exciting, both in thought processes and in societal analysis.

The re-entries lead to loops in the interpretation process and in many situations these loops can have puzzling logical effects (see Paradoxes and Logic, part 1 and part 2). In chapter 11 of ‘Laws of Form’2, Spencer-Brown describes the mathematical and logical effects around the re-entry in detail. In particular, he develops how logical oscillations occur due to the re-entry.

Entropy comes into play whenever descriptions of the same object occur simultaneously at different levels of detail, i.e. whenever an actor (e.g. a brain or the kitchen cleaner) wants to create order by organising an information-rich and intransparent microstate in such a way that a much simpler and easier to read macrostate develops.

We could say that the observer actively creates a new macro state from the micro state. However, the micro-state remains and still has the same amount of entropy as before. Only the macro state has less. When I comb my hair, all the hairs are still there, even if they are arranged differently. A macro state is created, but the information can still be described at the detailed micro level of all the hairs, albeit slightly altered in the arrangement on the macro level.

Re-entry – on the other hand – is a powerful logical pattern. For me, both re-entry and entropy complement each other in the description of reality. Distinction and re-entry are very elementary. Entropy, on the other hand, always arises when several things come together and their arrangement is altered or differently interpreted.

See also:

Five preconceptions about entropy

Category: Entropy

Translation: Juan Utzinger

1 Niklas Luhmann, Die Kontrolle von Intransparenz, ed. by Dirk Baecker, Berlin: Suhrkamp 2017, pp. 96-120

2 Georg Spencer-Brown, Laws of Form, London 1969 (Bohmmeier, Leipzig, 2011)

continues paradoxes and logic (part 2)

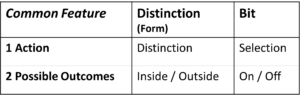

Before we examine Georg Spencer-Brown’s (GSB’s) distinction as a basic element for logic, physics, biology and philosophy, it is helpful to compare it with another, much better-known basic form, namely the bit. This allows us to better understand the nature of GSB’s distinction and the revolutionary nature of his innovation.

Bits and GSB forms can both be regarded as basic building blocks for information processing. Software structures are technically based on bits, but the forms of GSB (‘draw a distinction’) are just as simple, fundamental and astonishingly similar. Nevertheless, there are characteristic differences.

Fig. 1: Form and bit show similarities and differences

Both the bit and the Spencer-Brown form were found in the early phase of computer science, so they are relatively new ideas. The bit was described by C. E. Shannon in 1948, the distinction by Georg Spencer-Brown (GSB) in his book ‘Laws of Form’ in 1969, only about 20 years later. 1969 fell in the heyday of the hippie movement and GSB was warmly welcomed at Esalen, an intellectual hotspot and starting point of this movement. On the other hand, this may have put him in a bad light and kept the established scientific community from looking more closely into his ideas. While the handy bit vivified California’s nascent high-tech information movement, Spencer-Brown’s mathematical and logical revolution was largely ignored by the scientific community. It’s time to overcome this disparity.

Both the form and the bit refer to information. Both are elementary abstractions and can therefore be seen as basic building blocks of information.

This similarity reveals itself in the fact that both denote a single action step – albeit a different one – and both assign a maximally reduced number of results to this action, exactly two.

Table 1: Both bit and distinction each contain one action and two possible results (outcomes)

The action of the distinction is – as the name says – the distinction, and the action of the bit is the selection. Both actions can be seen as information actions and are as such fundamental, i.e. not further reducible. The bit does not contain further bits, the distinction does not contain further distinctions. Of course, there are other bits in the vicinity of the bit and other distinctions in the vicinity of a distinction. However, both actions are to be seen as fundamental information actions. Their fundamentality is emphasised by the smallest possible number of results, namely two. The number of results cannot be smaller, because a distinction of 1 is not a distinction and a selection of 1 is not a selection. Both are only possible if there are two potential results.

Both distinction and bit are thus indivisible acts of information of radical, non-increasable simplicity.

Nevertheless, they are not the same and are not interchangeable. They complement each other.

While the bit has seen a technical boom since 1948, its prerequisite, the distinction, has remained unmentioned in the background. It is all the more worthwhile to bring it to the foreground today and shed new light on what links mathematics, logic, the natural sciences and the humanities.

Both form and bit refer to information. In physics, the quantitative content of information is referred to as entropy.

At first glance, the information content when a bit is set or a distinction is made appears to be the same in both cases, namely the information that distinguishes between two states. This is clearly the case with a bit. As Shannon has shown, its information content is log2(2) = 1. Shannon called this dimensionless value 1 bit. The bit therefore contains – not surprisingly – the information of one bit, as defined by Shannon.

The bit measures nothing other than entropy. The term entropy originally came from thermodynamics and was used to calculate the behaviour of heat machines. In thermodynamics, entropy is the partner term of energy, but it applies – like the term energy – to all fields of physics, not just to thermodynamics.

Entropy is a measure of the information content. If I do not know something and then discover it, information flows. In a bit, there are – before I know which one is true – two states possible, the two states of the bit. When I find out which of the two states is true, I receive a small basic portion of information with the quantitative value of 1 bit.

One bit decides between two results. If more than two states are possible, the number of bits increases logarithmically with the number of possible states; so it takes three binary choices (bits) to find the correct choice out of 8 possibilities. The number of choices (bits) behaves logarithmically to the number of possible choices, as the example shows.

Dual choice = 1 bit = log2(2)

Quadruple choice = 2 bits = log2(4)

Octuple choice = 3 bits = log2(8)

The information content of a single bit is always the information content of a single binary choice, i.e. log2(2) = 1.
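The logarithmic relation can be checked with a short sketch. Both function names are hypothetical; the halving strategy is one simple way of asking yes/no questions and is an assumption of this example, not something prescribed by the text.

```python
import math

def bits_needed(n_choices: int) -> int:
    """Number of binary choices needed to single out one of n_choices."""
    if n_choices < 1:
        raise ValueError("need at least one possibility")
    return math.ceil(math.log2(n_choices))

def questions_asked(candidates, target) -> int:
    """Find target by repeated halving and count the yes/no questions."""
    remaining = list(candidates)
    if target not in remaining:
        raise ValueError("target must be one of the candidates")
    questions = 0
    while len(remaining) > 1:
        half = len(remaining) // 2
        first, second = remaining[:half], remaining[half:]
        questions += 1  # "Is the target in the first half?"
        remaining = first if target in first else second
    return questions

for n in (2, 4, 8):
    print(n, bits_needed(n), questions_asked(range(n), n - 1))
# prints:
# 2 1 1
# 4 2 2
# 8 3 3
```

Each question is one binary choice, so the number of questions matches log2 of the number of possibilities.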

The bit as a physical quantity is dimensionless, i.e. a pure number. This is fitting because the information about the choice is neutral, and not a length, a weight, an energy or a temperature. The bit serves well as the technical unit of quantitative information content. What is different with the other basic unit of information, the form of Spencer-Brown?

The information content of the bit is exactly 1 if the two outcomes of the selection have exactly the same probability. As soon as one of the two states is less probable, its choice reveals more information. When it is selected despite its lower prior probability, this makes more of a difference and reveals more information to us. The less probable its choice is, the greater the information will be, if it is selected. The classic bit is a special case in this regard: the probability of its two states is equal by definition and the information content of the choice is exactly 1.
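In Shannon's theory, the information revealed by a single outcome of probability p is -log2(p), a quantity often called surprisal (a term the text itself does not use). A minimal sketch, with probabilities chosen only for illustration:

```python
import math

def surprisal(p: float) -> float:
    """Information in bits revealed when an outcome of probability p occurs."""
    if not 0 < p <= 1:
        raise ValueError("p must lie in (0, 1]")
    return -math.log2(p)

for p in (0.5, 0.25, 0.1):
    print(f"p = {p}: {surprisal(p):.2f} bits")
# prints:
# p = 0.5: 1.00 bits
# p = 0.25: 2.00 bits
# p = 0.1: 3.32 bits
```

Only the equally probable case (p = 0.5) yields exactly 1 bit; the rarer the selected state, the more information its selection reveals.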

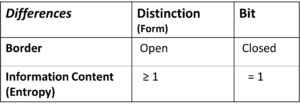

This is entirely different with Spencer-Brown’s form of distinction. The decisive factor lies in the ‘unmarked space’. The distinction distinguishes something from the rest and marks it. The rest, i.e. everything else, remains unmarked. Spencer-Brown calls it the ‘unmarked space’.

We can and must now assume that the remainder, the unmarked, is much greater, and the probability of its occurrence is much higher than the probability that the marked will occur. The information content of the mark, i.e. of drawing the distinction, is therefore usually greater than 1.

Of course, the distinction is about the marked and the marked is what interests us. That is why the information content of the distinction is calculated based on the marked and not the unmarked.

How large is the space of the unmarked? We would do well to assume that it is infinite. I can never know what I don’t know.

The difference in information content, measured as entropy, is the first difference we can see between bit and distinction. The information content of the bit, i.e. its entropy, is exactly 1. In the case of distinction, it depends on how large the unmarked space is, but it is always larger than the marked space and the entropy of the distinction is therefore always greater than 1.

Fig. 1 above shows the most important difference between distinction and bit, namely their external boundaries. These are clearly defined in the case of the bit.

The bit contains two states, one of which is activated, the other not. Apart from these two states, nothing can be seen in the bit and all other information is outside the bit. Not even the meanings of the two states are defined. They can mean 0 and 1, true and false, positive and negative or any other pair that is mutually exclusive. The bit itself does not contain these meanings, only the information as to which of the two predefined states was selected. The meaning of the two states is regulated outside the bit and assigned from outside. This neutrality of the bit is its strength. It can take on any meaning and can therefore be used anywhere where information is technically processed.

The situation is completely different with distinction. Here the meaning is marked. To do this, the inside of the distinction is distinguished from the outside. The outside, however, is open and there is nothing that does not belong to it. The ‘unmarked space’, in principle, is infinite. A boundary is defined, but it is the distinction itself. That is why the distinction cannot really separate itself from the outside, unlike the bit. In other words: The bit is closed, the distinction is not.

There are two essential differences between distinction and bit.

Table 2: Differences between Distinction (Form) and Bit

The two differences between distinction and bit have some interesting consequences.

The bit, due to its defined and simple entropy and its closed borders, has the technological advantage of simple usability, which we exploit in the software industry. Distinctions, on the other hand, are more realistic due to their openness. For our specific task of interpreting medical texts, we therefore came across the need to introduce openness into the bit world of technical software through certain principles: The keywords here are

Translation: Juan Utzinger

continues Paradoxes and Logic (part 1)

Spencer-Brown introduces the elementary building block of his formal logic with the words ‘Draw a Distinction’. Figure 1 shows this very simple formal element:

Fig 1: The form of Spencer-Brown

In fact, his logic consists exclusively of this building block. Spencer-Brown has thus achieved an extreme abstraction that is more abstract than anything mathematicians and logicians have found so far.

What is the meaning of this form? Spencer-Brown is aiming at an elementary process, namely the ‘drawing of a distinction’. This elementary process now divides the world into two parts, namely the part that lies within the distinction and the part outside.

Fig. 2: Visualisation of the distinction

Figure 2 shows what the formal element of Fig. 1 represents: a division of the world into what is separated (inside) and everything else (outside). The angle of Fig. 1 thus becomes mentally a circle that encloses everything that is distinguished from the rest: ‘draw a distinction’.

The angular shape in Fig. 1 therefore refers to the circle in Fig. 2, which encompasses everything that is recognised by the distinction in question.

But why does Spencer-Brown draw his elementary building block as an open angle and not as a closed circle, even though he refers to closedness by explicitly saying ‘Distinction is perfect continence’, i.e. he assigns a perfect inclusion to the distinction? The reason he nevertheless shows the continence as an open angle will become clear later, and will reveal itself to be one of Spencer-Brown’s ingenious decisions. ↝ imaginary logic value, to be discussed later.

In addition, it is possible to name the inside and the outside as the marked (m = marked) and the unmarked (u = unmarked) space and use these designations later in larger and more complex combinations of distinctions.

Fig. 3: Marked (m) and unmarked (u) space

To use the building block in larger logic statements, it can now be put together in various ways.

Fig. 4: Three combined forms of differentiation

Figure 4 shows how distinctions can be combined in two ways. Either as an enumeration (serial) or as a stacking, by placing further distinctions on top of prior distinctions. Spencer-Brown works with these combinations and, being a genuine mathematician, derives his conclusions and proofs from a few axioms and canons. In this way, he builds up his own formal mathematical and logical system of rules. Its derivations and proofs need not be of urgent interest to us here, but they show how carefully and with what mathematical meticulousness Spencer-Brown develops his formalism.
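The two ways of combining forms can be tried out in a small sketch. The text does not spell out the axioms, so this example relies on the two initial equations of the primary arithmetic in ‘Laws of Form’: two marks side by side condense to one mark (calling), and a mark inside a mark cancels to the unmarked state (crossing). Writing each distinction as a pair of parentheses is an assumption made here for typing convenience, and `parse` and `is_marked` are hypothetical helper names.

```python
def parse(expression: str) -> list:
    """Turn a string such as "(())()" into nested lists, one list per distinction."""
    stack = [[]]
    for ch in expression:
        if ch == "(":
            inner = []
            stack[-1].append(inner)
            stack.append(inner)
        elif ch == ")":
            if len(stack) == 1:
                raise ValueError("unbalanced ')'")
            stack.pop()
        elif not ch.isspace():
            raise ValueError(f"unexpected character {ch!r}")
    if len(stack) != 1:
        raise ValueError("unbalanced '('")
    return stack[0]

def is_marked(space: list) -> bool:
    """A space is marked (m) if it holds at least one distinction
    whose inside is unmarked (u)."""
    return any(not is_marked(inner) for inner in space)

for text in ("()", "()()", "(())", "(())()"):
    state = "m" if is_marked(parse(text)) else "u"
    print(f"{text} -> {state}")
# prints:
# () -> m
# ()() -> m
# (()) -> u
# (())() -> m
```

The serial combination `()()` has the same value as a single mark, while the stacked combination `(())` returns to the unmarked state.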

The re-entry is now what leads us to the paradox. It is indeed the case that Spencer-Brown’s formalism makes it possible to draw the formalism of real paradoxes, such as the barber’s paradox, in a very simple way. The re-entry acts like a shining gemstone (sorry for the poetic expression), which takes on a wholly special function in logical networks, namely the linking of two logical levels, a basic level and its meta level.

The trick here is that the same distinction is made on both levels. It involves the same distinction, but on two levels, and this one distinction refers to itself, from one level to the other, from the meta-level to the basic level. This is the form of paradox.

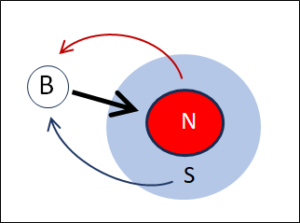

We can now notate the Barber paradox using Spencer-Brown’s form:

Fig. 5: Distinction of the men in the village who shave themselves (S) or do not shave themselves (N)

Fig. 6: Notation of Fig. 5 as perfect continence

Fig. 5 and Fig. 6 show the same operation, namely the distinction between the men in the village who shave themselves and those who do not.

So how does the barber fit in? Let’s assume he has just got up and is still unshaven. Then he belongs to the inside of the distinction, i.e. to the group of unshaven men N. No problem for him, he shaves quickly, has breakfast and then goes to work. Now he belongs to the men S who shave themselves, so he no longer has to shave. The problem only arises the next morning. Now he’s one of those men who shave themselves – so he doesn’t have to shave. Unshaven as he is now, however, he is a man he has to shave. But as soon as he shaves himself, he belongs to the group of self-shavers, so he doesn’t have to be shaven. In this manner, the barber switches from one group (S) to the other (N) and back. A typical oscillation occurs in the barber’s paradox – and in all other real paradoxes, which all oscillate.

Fig. 7: The barber (B) shaves all men who do not shave themselves (N)

Fig. 7 shows the distinction between the men N (red) and S (blue). This is the base level. Now the barber (B) enters. On a logical meta-level, it is stated that he shaves the men N, symbolised by the arrow in Fig. 7.

The paradox arises between the basic and meta level. Namely, when the question is asked whether the barber, who is also a man of the village, belongs to the set N or the set S. In other words:

→ Is B an N or an S ?

The answer to this question oscillates. If B is an N, then he shaves himself (Fig. 7). This makes him an S, so he does not shave himself. As a result of this second cognition, he becomes an N and has to shave himself. Shaving or not shaving? This is the paradox and its oscillation.
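The oscillation can be made visible by applying the barber's rule to himself step by step. This is only an illustrative sketch: treating each re-application of the rule as one time step, and starting with the barber in N, are assumptions of the example; `next_group` is a hypothetical name.

```python
def next_group(group: str) -> str:
    """Apply the barber's rule to the barber himself once.

    "N" = does not shave himself, "S" = shaves himself.
    """
    # He shaves exactly the men in N, so being in N moves him to S;
    # being in S means he must not shave himself, which moves him to N.
    return "S" if group == "N" else "N"

group = "N"  # assumption: he starts the morning unshaven
history = [group]
for _ in range(5):
    group = next_group(group)
    history.append(group)

print(" -> ".join(history))  # N -> S -> N -> S -> N -> S
```

The sequence never settles, because neither N nor S is mapped onto itself by the rule.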

How is it created? By linking the two levels. The barber is an element of the meta-level (macro level), but at the same time an element of the base level (micro level). Barber B is an acting subject on the meta-level, but an object on the basic level. The two levels are linked by a single distinction, but B is once the subject and sees the distinction from the outside, but at the same time he is also on the base level and there he is an object of this distinction and thus labelled as N or S. Which is true? This is the oscillation, caused by the re-entry.

The re-entry is the logical core of all true paradoxes. Spencer-Brown’s achievement lies in the fact that he presents this logical form in a radically simple way and abstracts it formally to its minimal essence.

The paradox is reduced to a single distinction that is read on two levels, firstly fundamentally (B is N or S) and then as a re-entry when considering whether B shaves himself.

The paradox is created by the re-entry in addition to a negation: he shaves the men who do not shave themselves. Re-entry and negation are mandatory in order to generate a true paradox. They can be found in all genuine paradoxes, in the barber paradox, the liar paradox, the Russell paradox, etc.

Georg Spencer-Brown’s achievement is that he has reduced the paradox to its essential formal core:

→ A (single) distinction with a re-entry and a negation.

His discoveries of distinction and re-entry have far-reaching consequences with regard to logic, and far beyond.

Let’s continue the investigation, see: Form (Distinction) and Bit

Translation: Juan Utzinger

Computer programs consist of algorithms. Algorithms are instructions on how and in what order an input is to be processed. Algorithms are nothing more than applied logic and a programmer is a practising logician.

But logic is a broad field. In a very narrow sense, logic is a part of mathematics; in a broad sense, logic is everything that has to do with thinking. These two poles show a clear contrast: The logic of mathematics is closed and well-defined, whereas the logic of thought tends to elude precise observation: How do I come to a certain thought? How do I construct my thoughts when I think? And what do I think just in this moment, when I think about my thinking? While mathematical logic works with clear concepts and rules, which are explicit and objectively describable, the logic of thinking is more difficult to grasp. Are there any rules for correct thinking, just as there are rules in mathematical logic for drawing conclusions in the right way?

When I look at the differences between mathematical logic and the logic of thought, something definitely strikes me: Thinking about my thinking defies objectivity. This is not the case in mathematics. Mathematicians try to safeguard every tiny step of thought in a way that is clear and objective and comprehensible to everyone as soon as they understand the mathematical language, regardless of who they are: the subject of the mathematician remains outside.

This is completely different with thinking. When I try to describe a thought that I have in my head, it is my personal thought, a subjective event that primarily only shows itself in my own mind and can only be expressed to a limited extent by words or mathematical formulae.

But it is precisely this resistance that I find appealing. After all, I wish to think ‘correctly’, and it is tempting to figure out how correct thinking works in the first place.

I could now fall back on mathematical logic. But the brain doesn’t work that way. In what way then? I have been working on this for many decades, in practice, concretely in the attempt to teach the computer NLP (Natural Language Processing). The aim has been to find explicit, machine-comprehensible rules for understanding texts, an understanding that is a subjective process and, being subjective, cannot easily be made objective from outside.

My computer programmes were successful, but the really interesting thing is the insights I was able to gain about thinking, or more precisely, about the logic with which we think.

My work has given me insights into the semantic space in which we think, the concepts that reside in this space and the way in which concepts move. But the most important finding concerned time in logic. I would like to look at this more closely, and to this end we first turn to paradoxes.

Anyone who seriously engages with logic, whether professionally or out of personal interest, will sooner or later come across paradoxes. A classic paradox, for example, is the barber’s paradox:

The barber of a village is defined by the fact that he shaves all the men who do not shave themselves. Does the barber shave himself? If he does, he is one of the men who shave themselves and whom he therefore does not shave. But if he does not shave himself, he is one of the men he shaves, so he also shaves himself. As a result, he is one of the men he does not have to shave. So he doesn’t shave – and so on. That’s the paradox: if he shaves, he doesn’t shave. If he doesn’t shave, he shaves.
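The back-and-forth of the barber can be made tangible in a few lines of Python. This is only a minimal sketch, assuming that "the barber shaves himself" is modelled as a single truth value; the function names `barber_shaves` and `iterate` are illustrative and not taken from any source. The static check finds no consistent answer, while feeding each answer back in as the next input produces the endless oscillation described above.

```python
def barber_shaves(shaves_himself):
    """Rule of the village: the barber shaves a man exactly when
    that man does not shave himself (the negation)."""
    return not shaves_himself


# Static view: is there a truth value that stays the same when the rule
# is applied to the barber himself (the re-entry)?
consistent = [v for v in (True, False) if barber_shaves(v) == v]


def iterate(start, steps):
    """Dynamic view: use each answer as the input of the next step."""
    states = [start]
    for _ in range(steps):
        states.append(barber_shaves(states[-1]))
    return states


print(consistent)        # no truth value survives the re-entry
print(iterate(True, 5))  # the answer keeps flipping
```

In this toy model the contradiction only appears when both values are demanded at once; taken step by step, the rule simply alternates.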

The same pattern can be found in other paradoxes, such as the liar paradox and many others. You might think that these kinds of paradoxes are far-fetched and don’t really play a role. But paradoxes do play a role, at least in two places: in maths and in the thought process.

Russell’s paradox has revealed the gap in set theory. Its ‘set of all sets that do not contain themselves as an element’ follows the same pattern as the barber of the barber paradox and leads to the same kind of unsolvable paradox. Kurt Gödel’s two incompleteness theorems are somewhat more complex, but are ultimately based on the same pattern. Both Russell’s and Gödel’s paradoxes have far-reaching consequences in mathematics. Russell’s paradox has led to the fact that set theory can no longer be formed using unrestricted sets alone, because this leads to untenable contradictions. Zermelo therefore restricted the formation of sets by axioms, and later axiomatisations supplemented the sets with classes, thus giving up the perfectly closed nature of set theory.

Gödel’s incompleteness theorems, too, are ultimately based on the same pattern as the barber paradox. Gödel had shown that every consistent formal system (formal in the sense of the mathematicians) that is powerful enough to express arithmetic must contain statements that can neither be formally proven nor disproven. A heavy blow for mathematics and its formal logic.

Russell’s refutation of the simple set concept and Gödel’s proof of the incompleteness of formal logic suggest that we should think more closely about paradoxes. What exactly is the logical pattern behind Russell’s and Gödel’s problems? What makes set theory and formal logic incomplete?

The question kept me occupied for a long time. Surprisingly, it turned out that paradoxes are not just annoying evils, but that it is worth using them as meaningful elements in a new formal logic. This step was demonstrated in exemplary fashion by the mathematician George Spencer-Brown in his 1969 book ‘Laws of Form’, which includes a maximally simple formalism for logic.

I would now like to take a closer look at the structure of paradoxes, as Spencer-Brown has laid it out, and at the consequences this has for logic, physics, biology and more.

Continue: Paradoxes and Logic (part 2)

Translation: Juan Utzinger

Nerds like to be interested in complex topics and entropy fits in well, doesn’t it? It helps them to portray themselves as superior intellectuals. This is not your game and you might not see any practical reasons to occupy yourself with entropy. This attitude is very common and quite wrong. Entropy is not a nerdy topic, but has a fundamental impact on our lives, from elementary physics to practical everyday life.

Examples (according to W. Salm1)

So there are plenty of reasons to look into the phenomenon of entropy, which can be found everywhere in everyday life. But most people tend to avoid the term. Why is that? This is mainly due to the second preconception.

It is true that at first glance, entropy is rather confusing. However, entropy is only difficult to understand because of persistent preconceptions (see points 4 and 5, below). These ubiquitous preconceptions are the obstacles that make the concept of entropy seem incomprehensible. Overcoming them not only helps to understand many real and practical phenomena, but also sheds light on the foundations that hold our world together.

The term entropy stems from thermodynamics. But we should not be misled by this. In reality, entropy is something that exists everywhere in physics, chemistry, biology and also in art and music. It is a general and abstract concept and it refers directly to the structure of things and the information they contain.

Historically, the term was introduced less than 200 years ago in thermodynamics and was associated with the possibility of allowing heat (energy) to flow. It helped to understand the mode of operation of machines (combustion engines, refrigerators, heat pumps, etc.). The term is still taught in schools this way.

However, thermodynamics only shows a part of what entropy is. Its general nature was only described by C.E. Shannon2 in 1948. The general form of entropy, also known as Shannon or information entropy, is the proper, i.e. the fundamental form. Heat entropy is a special case.

Through its application to heat flows in thermodynamics, entropy as heat entropy was given a concrete physical dimension, namely J/K, i.e. energy per temperature. However, this is the special case of thermodynamics, which deals with energies (heat) and temperature. If entropy is understood in a very general and abstract way, it is dimensionless, a pure number.

As the discoverer of abstract and general information entropy, Shannon gave this number a name, the “bit”. For his work as an engineer at the Bell telephone company, Shannon used the dimensionless bit to calculate the flow of information in the telephone wires. His information entropy is dimensionless and applies not only in thermodynamics, but wherever information and flows play a role.
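The relation between the dimensionless bit and the thermodynamic unit J/K can be illustrated with a short calculation. The following is a minimal sketch, not Shannon's own notation: it computes the entropy H = -Σ p·log2(p) of a probability distribution in bits and converts bits to J/K with the factor k_B·ln 2. The function names are assumptions chosen for this illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant k_B (exact SI value)


def shannon_entropy_bits(probabilities):
    """Entropy H = -sum(p * log2(p)) in bits; outcomes with p = 0 are skipped."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def bits_to_joule_per_kelvin(bits):
    """Give dimensionless bits the thermodynamic dimension: S = k_B * ln(2) * bits."""
    return BOLTZMANN_J_PER_K * math.log(2) * bits


fair_coin = shannon_entropy_bits([0.5, 0.5])    # maximal uncertainty for two outcomes
biased_coin = shannon_entropy_bits([0.9, 0.1])  # less uncertainty, fewer bits
one_bit_in_j_per_k = bits_to_joule_per_kelvin(1)

print(fair_coin, biased_coin, one_bit_in_j_per_k)
```

A fair coin yields exactly one bit, a biased coin less than one. The conversion factor shows how small a single bit is when expressed in the units of heat entropy.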

Many of us learnt at school that entropy is a measure of noise and chaos. Additionally, the second law of thermodynamics tells us that entropy can only ever increase. Thus, disorder could only increase. However, identifying entropy with noise or even chaos is misleading.

There are good reasons for this misleading idea: If you throw a sugar cube into the coffee, its well-defined crystal structure dissolves, the molecules disperse in a disorderly fashion in the liquid and the sugar shows a transition from ordered to disordered. This decay of order can be observed everywhere in nature. In physics, it is entropy that drives the decay of order according to the second law. And decay and chaos can hardly be equated with Shannon’s concept of information. Many scientists thought the same way and therefore equated information with negentropy (entropy with a negative sign). At first glance, this doesn’t seem to be a bad match. In this view, entropy is noise and the absence of noise, i.e. negentropy, would then be information. Logical enough, isn’t it?

Not quite, because information is contained both in the sugar cube and in the dissolved sugar molecules floating in the coffee. In some ways, there is even more information in the individually floating molecules because each has its own path. Their bustling movements contain information. For us coffee drinkers, however, the bustling movements of the many molecules in the cup do not contain useful information and appear only chaotic. Can this chaos be information?

The problem is our conventional idea of information. Our idea is too static. I suggest that we see entropy as something that denotes a flow, namely the flow between not knowing and knowing. This dynamic is characteristic of learning, of absorbing new information.

Every second, an incredible amount of things happen in the cosmos that could be known. The information in the entire world can only increase. This is what the second law says, and what increases is entropy, not negentropy. Wouldn’t it be much more obvious to put information in parallel with entropy and not with negentropy? More entropy would then mean more information and not more chaos.

Where can the information be found? In the noise or in the absence of noise? In entropy or in negentropy?

Two Levels

Well, the dilemma can be solved. The crucial step is to accept that entropy is the tension between two states, the overview state and the detail state. The overview does not need the details, but only sees the broad lines. C.F. Weizsäcker speaks of the macro level. The broad lines are the information that interests us. Details, on the other hand, appear to us as unimportant noise. But the details, i.e. the micro level, contain more information, usually a whole lot more. Just take the movements of the water molecules in the coffee cup (micro level), whose chaotic bustle contains more information than the single indication of the temperature of the coffee (macro level). Both levels are connected and their information depends on each other in a complex way. Entropy is the difference between the two amounts of information. The amount is always greater at the detail level (micro level), because there is always more to know in the details than in the broad lines.

But because the two levels refer to the same object, you as the observer can look at the details or the big picture. Both belong together. The gain in information about details describes the transition from the macro to the micro level, the gain in information about the overview describes the opposite direction.

So where does the real information lie? At the detailed level, where many details can be described, or at the overview level, where the information is summarised and simplified in a way that really interests us?

The answer is simple: information contains both the macro and the micro level. Entropy is the transition between the two levels and, depending on what interests us, we can make the transition in one direction or the other.
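The difference between the two levels can be counted in a toy model. The sketch below is an assumption made for illustration and does not come from Shannon or Weizsäcker: ten fair coins stand in for the molecules, the exact head/tail sequence is the micro level and the number of heads is the macro level. The function `missing_bits` (a hypothetical name) gives the information that the macro level leaves open, i.e. the logarithm of the number of sequences compatible with it.

```python
import math


def micro_bits(n_coins):
    """Bits needed to name one exact head/tail sequence of n fair coins."""
    return float(n_coins)


def missing_bits(n_coins, n_heads):
    """Bits still unknown when only the macro level (number of heads) is given:
    log2 of the number of sequences that show exactly n_heads heads."""
    return math.log2(math.comb(n_coins, n_heads))


n = 10
for heads in (0, 1, 5):
    # The gap between the levels is largest for the least specific macro state.
    print(heads, round(missing_bits(n, heads), 2), "of", micro_bits(n), "bits open")
```

With zero heads the overview already fixes every detail, so nothing is missing. With five heads, almost eight of the ten bits remain hidden in the details.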

Example Coffee Cup

This is classically demonstrated in thermodynamics. The temperature of my coffee can be seen as a metric for the average kinetic energy of the individual liquid molecules in the coffee cup. The information contained in the many molecules is the micro state, the temperature is the macro state. Entropy is the knowledge that is missing in the macro state but is present in the micro state. But for me as a coffee drinker, only the knowledge of the macro state, the temperature of the coffee, is relevant. This is not present in the micro state insofar as it does not depend on the individual molecules, but rather statistically on the totality of all molecules. It is only in the macro state that knowledge about temperature becomes tangible.

For us, only the macro state shows relevant information. But there is additional information in the noise of the details. How exactly the molecules move is a lot of information, but these details don’t matter to me when I drink coffee. Only their average kinetic energy determines the temperature of the coffee, which is what matters to me.

The information-rich and constantly changing microstate has a complex relationship with the simple macroinformation of temperature. The macro state also influences the micro state, because the molecules have to move within the statistical framework set by the temperature. Both pieces of information depend on each other and are objectively present in the object at the same time. What differs is the level or scope of observation. The difference in the amount of information in the two levels determines the entropy.

These conditions have been well known since Shannon2 and C.F. Weizsäcker. However, most schools still teach that entropy is a measure of noise. This is misleading. Entropy should always be understood as a delta, as a difference (distance) between the information in the overview (macro state) and the information in the details (micro state).

The fact that entropy is always a distance, a delta, i.e. mathematically a difference, also means that entropy is not an absolute value, but rather a relative value.

Example Coffee Cup

Let’s take the coffee cup as an example. How much entropy is in there? If we only look at the temperature, then the micro state consists of the kinetic energies of the individual molecules. But the coffee cup contains even more information: How strong is the coffee? How strongly sweetened? How strong is the acidity? What flavours does it contain? Depending on which of these questions we ask, the micro state is a different one, and with it the entropy.

Example School Building

Salm1 gives the example of a lost door key that a teacher is looking for in a school building. If he knows which classroom the key is in, he has not yet found it. At this moment, the micro state only names the room. Where in the room is the key? Perhaps in a cupboard. In which one? At what height? In which drawer, in which box? The micro state varies depending on the depth of the enquiry. It is a relative value.
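How the missing information depends on the depth of the enquiry can be sketched with invented numbers. The hierarchy below (32 classrooms, 4 cupboards per room, 8 drawers per cupboard, 2 boxes per drawer) is purely hypothetical and not taken from Salm. It also assumes that all options at each level are equally likely, so each level contributes log2(options) bits.

```python
import math

# Hypothetical search hierarchy: (level, number of equally likely options)
levels = [("classroom", 32), ("cupboard", 4), ("drawer", 8), ("box", 2)]


def bits_to_depth(levels, depth):
    """Bits needed to pin down the key to the given depth of the hierarchy."""
    return sum(math.log2(options) for _, options in levels[:depth])


total = bits_to_depth(levels, len(levels))
for depth in range(len(levels) + 1):
    known = bits_to_depth(levels, depth)
    # 'known' is what the teacher has at this depth, the rest is still open.
    print(depth, known, total - known)
```

If the enquiry stops at the classroom, 5 bits describe the micro state. If it continues down to the box, the same key requires 11 bits. The value depends on where we choose to stop asking.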